Walking a DNS Failure in a Zero Trust Environment

A cloud-brokered Zero Trust app loads, policy allows it, and identity succeeds, yet the app still fails. This walkthrough shows how a single DNS record can bypass enforcement and how to diagnose it step by step.

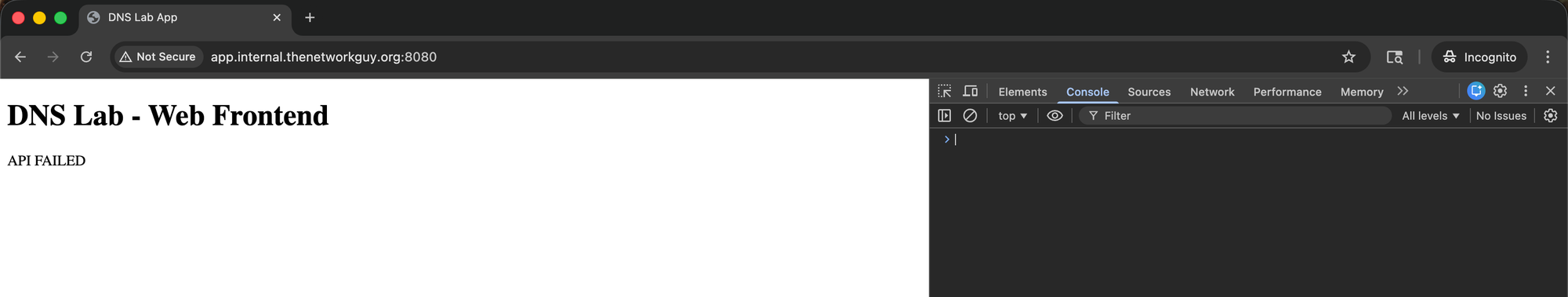

A user opens http://app.internal.thenetworkguy.org:8080. The page loads. Authentication succeeds. The access platform shows the request as allowed. But the application fails.

The reason: the application and its backend API use different hostnames. The application hostname resolves correctly. The API hostname does not.

In production, the API hostname should resolve to:

api.internal.thenetworkguy.org -> 10.30.12.110

That IP hosts the API service container.

During a recent infrastructure change, the DNS record was updated incorrectly. It now points to:

api.internal.thenetworkguy.org -> 10.30.12.210

Nothing answers on port 5000 at that address, so the API call times out. The page loads, but the application is broken.

Most troubleshooting starts with policy and identity. That makes sense — those are the systems you manage directly and the logs are readily available. But when DNS returns an answer that bypasses the enforcement path, policy cannot meaningfully apply to that request.

Here's how to diagnose it.

Step 1: What IP is DNS returning?

From the client:

$ dig +short api.internal.thenetworkguy.org

10.30.12.210

DNS resolves successfully: no NXDOMAIN, no SERVFAIL. The query returns a valid internal IP address. The client is using the corporate resolver at 192.168.1.10, which is expected (the SERVER line in dig's full output, without +short, confirms which resolver answered). From DNS's perspective, everything is working.

DNS did not fail loudly. It returned a valid answer.
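Because the answer looks healthy, the only way to catch it is to compare it with what the record is supposed to be. The following is a minimal Python sketch of that comparison, not something taken from the original troubleshooting session. The names `EXPECTED`, `resolve_ipv4`, and `check_resolution` are hypothetical, and the expected address is the production record described earlier. It uses the system resolver through `socket.getaddrinfo`, so it sees what applications on the client see, which can differ from a direct `dig` query when a hosts file or local cache is involved.

```python
import socket

# Assumption: the known-good record from the production design described above.
EXPECTED = {"api.internal.thenetworkguy.org": {"10.30.12.110"}}


def resolve_ipv4(hostname):
    """Return the set of IPv4 addresses the system resolver gives for hostname."""
    infos = socket.getaddrinfo(hostname, None, family=socket.AF_INET,
                               type=socket.SOCK_STREAM)
    return {info[4][0] for info in infos}


def check_resolution(hostname, expected=EXPECTED, resolve=resolve_ipv4):
    """Compare the resolved addresses with the expected ones.

    Returns (ok, resolved). ok is False when resolution fails outright or
    when any returned address is not in the expected set.
    """
    try:
        resolved = resolve(hostname)
    except socket.gaierror:
        return False, set()  # the loud failure: no usable answer at all
    wanted = expected.get(hostname, set())
    ok = bool(resolved) and resolved <= wanted
    return ok, resolved
```

The `resolve` parameter exists so the comparison can be exercised with a stub instead of a live resolver. A check like this only helps if the expected table is maintained somewhere other than the DNS zone it is validating.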

Step 2: Can we reach that IP?

$ curl -v --max-time 10 http://api.internal.thenetworkguy.org:5000/health

* Host api.internal.thenetworkguy.org:5000 was resolved.

* IPv4: 10.30.12.210

* Trying 10.30.12.210:5000...

* Connection timed out after 10002 milliseconds

curl: (28) Connection timed out after 10002 milliseconds

The hostname resolves. The client attempts to connect. The connection times out.

The timeout alone doesn't tell us why: nothing may be listening on port 5000, the host may be unreachable, or a firewall may be dropping packets. (A closed port on a reachable, unfiltered host usually fails fast with "connection refused" rather than timing out.) Either way, the client cannot establish a connection to the service at the returned IP.

The DNS answer is syntactically valid, but operationally wrong.
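When this check is automated, it helps to record how the connection attempt ended, since a timeout and a refusal point to different causes. Below is a minimal Python sketch under that assumption. `classify_connect` is a hypothetical helper, and its `connect` parameter exists only so the function can be exercised without a network.

```python
import socket


def classify_connect(host, port, timeout=10.0, connect=socket.create_connection):
    """Attempt one TCP connection and describe how it ended.

    Returns "connected", "refused", "timeout", "dns_error",
    or "error: <details>" for anything else.
    """
    try:
        conn = connect((host, port), timeout=timeout)
    except ConnectionRefusedError:
        # An active refusal (TCP reset): something answered, but no
        # listener accepted the connection.
        return "refused"
    except (socket.timeout, TimeoutError):
        # No answer in time: host down, route missing, or packets dropped.
        return "timeout"
    except socket.gaierror:
        # The name did not resolve, so no connection was attempted.
        return "dns_error"
    except OSError as exc:
        # For example "network is unreachable" or "no route to host".
        return f"error: {exc}"
    conn.close()
    return "connected"
```

Calling `classify_connect("api.internal.thenetworkguy.org", 5000)` mirrors the curl command above, with the default 10-second limit standing in for `--max-time 10`.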

Step 3: Compare expected vs. actual

Expected behavior:

$ dig +short api.internal.thenetworkguy.org

10.30.12.110

$ curl --max-time 10 http://api.internal.thenetworkguy.org:5000/health

{"hostname":"888b409a2f78","status":"ok"}

Actual behavior:

$ dig +short api.internal.thenetworkguy.org

10.30.12.210

$ curl --max-time 10 http://api.internal.thenetworkguy.org:5000/health

curl: (28) Connection timed out after 10002 milliseconds

The only change is the returned IP address. The application hostname still resolves correctly to the Zero Trust platform's cloud proxy. The access policy still allows the user. Identity still evaluates successfully.

But the API hostname now resolves to an IP that does not host the service—and does not route through the enforcement point where policy is applied.

The Zero Trust platform enforces access at a cloud-based proxy. Traffic destined for internal applications must route through that proxy for policy evaluation. When DNS returns a direct internal IP address, the client attempts to connect directly, and the traffic bypasses the enforcement point entirely. Policy cannot meaningfully apply to a request that never reaches it.
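One way to make a bypass visible is to test whether a resolved address belongs to the ranges that lead to the enforcement point. The Python sketch below assumes you know those ranges. `PROXY_NETWORKS` is a placeholder built from a documentation-only address block, not a real platform range, and `routes_through_proxy` and `describe_path` are hypothetical names.

```python
import ipaddress

# Assumption: placeholder range (RFC 5737 documentation block). Replace it
# with the ranges your Zero Trust platform actually uses for its proxy.
PROXY_NETWORKS = [ipaddress.ip_network("203.0.113.0/24")]


def routes_through_proxy(ip, proxy_networks=PROXY_NETWORKS):
    """Return True if ip falls inside one of the proxy's networks."""
    addr = ipaddress.ip_address(ip)
    return any(addr in net for net in proxy_networks)


def describe_path(ip, proxy_networks=PROXY_NETWORKS):
    """Label an address as taking the enforced path or a direct one."""
    if routes_through_proxy(ip, proxy_networks):
        return "enforced"
    if ipaddress.ip_address(ip).is_private:
        return "direct-internal"  # the client will connect straight to the host
    return "direct-other"
```

With the placeholder range above, `describe_path("10.30.12.210")` returns `"direct-internal"`: the client would connect to that address itself rather than through the proxy.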

From the platform's perspective, access is allowed. From the user's perspective, the application is broken.

This was not a policy or identity issue. It was a single DNS record pointing to the wrong IP address.

The pattern is consistent:

- DNS resolution succeeds

- The returned IP is syntactically valid

- The service is not reachable at that IP

- Traffic never reaches the enforcement point

- Access logic is never meaningfully involved

When you reason through the request in order—resolution, reachability, enforcement—the failure becomes obvious. The diagnosis takes minutes instead of hours.
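The ordering itself can be written down as a small routine that stops at the first check that fails. This is a Python sketch of the reasoning, not a tool used in the original diagnosis. `diagnose` and its three callables (`resolve`, `reachable`, `enforced`) are hypothetical hooks that you would back with your own resolver lookup, connection test, and knowledge of the enforcement path.

```python
def diagnose(hostname, resolve, reachable, enforced):
    """Run the three checks in order and stop at the first failure.

    resolve(hostname) -> list of IP strings (empty if resolution fails)
    reachable(ip)     -> True if the service port accepts a connection
    enforced(ip)      -> True if traffic to ip passes the enforcement point
    Returns (stage, detail), where stage names the first failing check
    or is "ok" when all three pass.
    """
    ips = resolve(hostname)
    if not ips:
        return "resolution", f"{hostname} did not resolve"
    for ip in ips:
        if not reachable(ip):
            return "reachability", f"{hostname} -> {ip} is not accepting connections"
    for ip in ips:
        if not enforced(ip):
            return "enforcement", f"{hostname} -> {ip} bypasses the enforcement point"
    return "ok", f"{hostname} -> {', '.join(ips)}"
```

In the failure described here, resolution passes and the routine stops at reachability. Because the detail string carries the resolved address, the next question is immediate: is that the address the record is supposed to return?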

The fix is straightforward: correct the DNS record. The hard part is recognizing that DNS is involved at all when nothing in the logs says "DNS failure."

When DNS misdirects traffic, the logs usually point you somewhere else. These failures present as timeouts, partial application loads, or "allowed but broken" behavior. The key principle: if resolution directs traffic away from the enforcement path, policy becomes irrelevant.

In the next post, we'll look at split DNS and multi-view resolution—where the same hostname resolves differently depending on network location or resolver choice. Those scenarios are harder to detect and easier to misattribute. But the diagnostic approach remains the same.

Resolution shapes everything that follows.